Parikshit Augmented Generation

Hello World!

This is the inaugural post of a monthly series called Parikshit Augmented Generation, a riff on the popular Retrieval-Augmented Generation (RAG) framework that connects LLMs to external knowledge sources. Each month, I will distill my thoughts on summaries generated by an AI agent, which I programmed and trained on my personal library. It is a retrospective on the select tidbits that caught my eye over the month: AI summaries, bolstered by personal reflections.

Human brain pickings in an AI world

It would be a shame if I put in all this effort and sincerity just to subject you, dear reader, to AI slop. What I mean to do here is quite the opposite. This is a deeply personal piece of work I have built on top of my Notion database, Structure Function.

Structure Function was set up as a personal library in Notion that curates information from all over the web. A newsletter of newsletters, an aggregator, if you will. This morphed into a full dataset: a wealth of information and knowledge that is gated, filtered, and stored based on my musings and interests. Every bit of material that goes into Structure Function is a deliberate decision, material I want to meditate on, and share with you.

Over the past couple of years, I have run simple Python scripts to analyze the sentiment of this material and played around with the usual t-SNE/LDA-type topic models to understand the clustering of topics. It was an effort to gain a one-step-removed understanding of my digital brain, and to study my archives en masse, at library scale and not just at article scale. This proved to be a fun exercise, but nothing too exciting or worthy of your attention.

Enter Parikshit-Augmented-Generation: a custom agent, Nimbe Weaver, that I set up in NotionAI to summarize my monthly picks. The agent has a preset prompt to pick the “interesting” articles that caught my eye, then compile and summarize them. I then synthesize this monthly AI retrospective with my own thoughts.

You may ask, in a world where you can deep research your way into any topic, why does all this matter?

I would say this matters precisely because we live in a world where you can deep research your way into anything, and it has never been more important to plant your own flag and to make AI your own.

In an age of AI superintelligence, the unique shards of our irrational exuberance, the collective tribal knowledge from our neighborhoods and bubbles, and the visceral feelings that come with being human ought to be reflected on, and celebrated, and this is my way of doing so.

You may have heard of the famous “Principal-Agent” problem, where an Agent can act on their own behalf and be misaligned with the Principal’s needs. This blog is my attempt at building a Principal-Agent harmony for my corner of the internet, as I decode the world.

Okay, I’ve gone too meta. Let’s get to this month’s content.

<Parikshit-Gen>

B(AI)o-bucks Much?

AI-workers x pharma org charts, pharma loves AI, superintelligence in a mercantilist world…

Wow! Seeing the number of articles on drug discovery that I read last month, and that my agent picked up, was truly shocking. I would have imagined that a lot more on the geopolitics of commodity flows through the Strait of Hormuz, or on investing in resilient supply-chain innovation, would show up here, but I guess not.

AI in drug discovery is a theme that has held steady over the years. More than a decade in, there’s no stopping the promise of cures and therapies, the hype of AI changing the game, and the harsh realities of regulatory and clinical failures.

Schrödinger (NASDAQ: SDGR), the OG in the space, reported a doubling of its drug discovery revenue between 2024 and 2025 (though drug discovery is still not the majority driver of total revenue). A generous view is that this shows how the pioneering computational docking tools they built and brought to market remain relevant after all these years. The counterpoint, though, is that the stock hasn’t performed handsomely: it is down 64% all-time (as of April 9, 2026).

And it’s not just tools. Pipeline companies such as Recursion Pharmaceuticals (NASDAQ: RXRX) show how public markets have discounted the importance of discovery alone. Is it the pipeline, and the progress in moving drugs to the clinic, that counts?

What’s interesting here is that, despite the stock performance of the two cases I picked above, deal-making seems to be flourishing in this area, with incumbents driving much of it. Eli Lilly is on a partnership spree: first their big deal with NVIDIA last year to build a supercomputer for drug discovery, and now Liquid AI and Insilico’s recent deal with Eli Lilly for on-prem deployment [more on this below], another interesting partnership in the space. It’s crazy when you start thinking about Eli Lilly’s windfall in obesity and how they go from this obesity windfall to a token pipeline. Who would have thought these two vectors would feed into each other!

The public market disappointments so far have not dissuaded VCs and markets from doing more. My agent picked up Isomorphic, which raised big bucks from VCs in the space [see below], and Generate Bio [S-1], which went public earlier this year.

And it’s not just pharma. Tech seems to be taking on the Bio-Bucks playbook — NVIDIA announced a strategic partnership with Lumentum and another one with Coherent — a mix of upfront and milestone-driven pricing, which used to be the norm in biotech. Perhaps this signals an era where IP and model advantages will be tech transferred into big tech, just like it happened to new innovations in pharma and biotech. Who knows?!

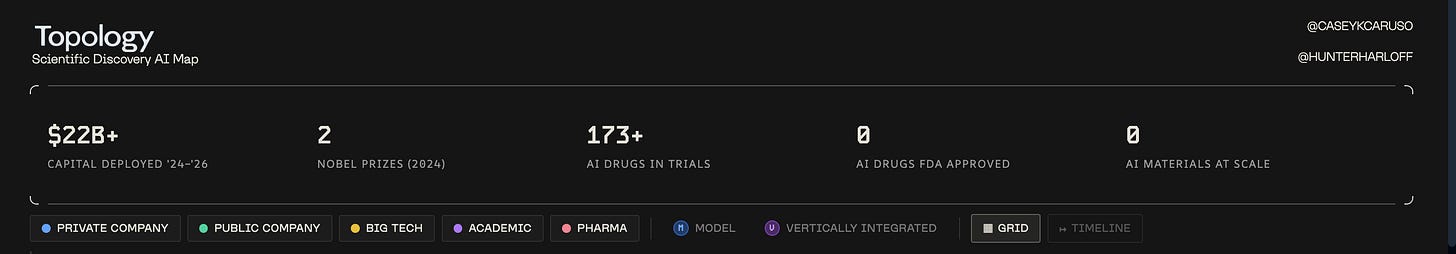

It shouldn’t be surprising that incumbents will use the best tools [sidebar on incumbents and disruption], and that they will continue buying them. The buck will continue to stop at the pricing of the value that these tools create. Will the $22 billion in capital deployed in scientific discovery, the increasing calls for building more data-generating labs for the world [link], and this pipeline of 173 drugs in trials [dive deeper] meet the same fate as the two stocks I picked above?

Almost a decade of drug discovery has only reinforced that discovery is merely the beginning; masterful development, manufacturing, and market-making are what it takes. We now need to go from AI-accelerated discovery to AI-accelerated design of drugs, from in-silico hits to clinic-ready assets.

The more industry insiders I talk to, the more it seems they have had many wonder materials/drugs in the pipeline for decades. They used to be computer-aided-designed materials, which then became machine-learned materials, and now they are AI-generated materials.

Manufacturing and testing, weighed down by regulation, trade wars, and bottlenecks, haven’t been de-bottlenecked by any of those tech leaps. This is not a hot take, but it is one that must be spoken about more and more, as a call to action to advance from AI drug discovery to AI drug development. This holds for AI-accelerated science at large.

Something that really jibed with my thoughts on moving past AI discovery to AI-led development, and AI-led manufacturing by extension, was this Relentless podcast on manufacturing featuring Sam D'Amico, founder of Impulse, and Aaron Slodov, founder of Atomic Industries.

I found the framing of “tribal knowledge” that is locked in an industry, and the importance of encoding that tribal knowledge into our models, to be brilliant. I also found “Reinforcement Learning from Factory Feedback” an excellent term of art; it stuck with me this month. And to go back to the topic of AI in pharma, without belaboring my point any further, one realizes how much specialist wisdom and tactics are locked behind closed doors and tight networks in pharma and regulated industry, all of which will have to get encoded into AI workflows if we want to push the paradigm from AI discovery to AI-native development and manufacturing.

And now take a step back: with all this AI slowly taking over the industry and wanting to penetrate the physical world, I continued asking myself this month whether we’re now making things riskier by stacking token risk on top of commodity risk?

In other words, there is no doubt that physical AI and embodied AI are the next frontier, but is this a frontier that will be venture-backable? The quest for the right capital stack to match these accelerated tech stacks that we are producing is one that fascinates me deeply. I won’t be surprised if we see emergent capital stacks rise to finance and price innovation differently, given the pace at which we are coming up with new tools.

In some commodity markets, you see different asset classes, like private equity’s stronghold in ag [ good read below ], but it remains to be seen if tech and “Reinforcement Learning from Factory Feedback” will disrupt trade practices that are so entrenched in the global economy. Copper One’s efforts in automating copper mining caught my eye. But we’ll see how far they go in terms of disrupting the industry. [ more below ].

Before this gets any longer, this month’s reading leaves me with a lingering thought: is the irony here that we are seeing a world where tech advances, with imminent AI superintelligence in everyone’s pocket, will accelerate, not dissipate, mercantilism? And here, when I say mercantilism, I am referring to the old economic doctrine that led European nation-states to maximize exports and minimize imports, often creating trade monopolies. One could argue that allowing state-subsidized goods and services to flood the market violated the spirit of free trade and the charter of the World Trade Organization, so we were living in a tech-fueled quasi-mercantilist state all along. [Good related read]

Would a super-intelligent AI and post-money financial system [related read] allow mercantilism to take root? It seems too smart to let that happen?

Tell me if you agree:

</Parikshit-Gen>

Dive deeper into the topics I covered above:

<AI-Gen>

Parikshit Augmented Generation_VC — 2026-03

A March digest of what you added to VC Landscape & News. Themes this month: biotech financing and dealmaking, “AI for bio” platform maturation, and a cluster of essays mapping second-order effects of abundant intelligence and AI platform power.

Featured articles:

Liquid AI and Insilico Release Lightweight On-Premise AI Model for Drug Discovery

Summary

A collaboration positioned around a lightweight, on-premise-capable AI model for drug discovery, emphasizing enterprise deployment constraints (data privacy, security, compute locality) as much as raw performance.

Key points

Focus is on on-prem deployment, which can be a decisive requirement for pharma and regulated R&D.

Lightweight models can expand adoption by lowering infra burden and easing integration into secure environments.

Signals a market for “practical AI” packaging: deployment, governance, and workflow integration.

So what?

For AI-bio, the next competition may be less “best model” and more “best deployable system.” On-prem and hybrid architectures can be a wedge, especially where data movement is the blocker.

Biotech’s top money raisers: 2025

Summary

A look at the largest private biotech financings of 2025, highlighting a market that preferred fewer, larger rounds and later-stage assets. A notable signal is how much capital flowed to obesity/metabolic programs and to AI-native drug discovery platforms.

Key points

2025 featured fewer but larger venture rounds; the top 10 rounds were nearly $4B combined.

Isomorphic Labs and Kailera each raised $600M (the year’s top financings), reflecting investor comfort with large checks when platform and market opportunity are clear.

Obesity remained a capital magnet, with multiple large rounds and programs advancing toward Phase 3.

AI-enabled drug discovery showed up as an explicit driver of investor enthusiasm and partnership value.

A “modest rebound” narrative sets expectations for continued late-stage momentum into 2026.

So what?

For deal sourcing and thesis work, the bar for “fundable” appears to be either:

clear path to large markets with late-stage readiness, or

platform stories with credible proof that the platform can generate clinical assets and partnering leverage.

Generate Biomedicines S-1/A

Summary

Generate positions itself as a clinical-stage “generative biology” company building an integrated design–build–test–learn platform. The filing emphasizes intentionality at scale: generative models plus high-throughput biohardware to generate proprietary data and translate computationally designed proteins into the clinic.

Key points

Platform integrates generative and predictive models with scalable biohardware and high-throughput assays.

Claims multiple computationally engineered proteins in human testing.

Lead program GB-0895 (anti-TSLP mAb) is in pivotal Phase 3 trials for severe asthma with an intended Q26W dosing interval.

Highlights capacity investments (e.g., Cryo-EM output) as part of the proprietary data flywheel.

Frames a modular capability approach that can be reused across modalities and targets.

So what?

The “AI for bio” story increasingly looks like an execution story: the durable advantage may be in integrated wet-lab throughput, assay miniaturization, and proprietary datasets that improve the model loop, not in model architecture alone.

Scientific Discovery AI Map | Topology Research

Summary

A landscape-style map of companies and approaches in AI-enabled scientific discovery, useful as a navigation aid for “who exists where” across the stack.

Key points

Provides a structured view of the scientific AI ecosystem.

Can be used to spot clustering, whitespace, and repeated patterns in company positioning.

So what?

Use this as a lightweight reference for diligence and sourcing: when you see a new company, you can quickly place it in the broader topology and identify nearby competitors or complements.

The world needs an AI lab — for Data - by Bobby Samuels

Summary

A case for treating high-quality data and the pipelines that create it as a first-class, institutional capability, analogous to how labs create scientific knowledge. The framing is that model progress increasingly depends on systematic data generation and governance.

Key points

Data is framed as the limiting factor for many practical AI deployments.

Suggests an “AI lab for data” as a repeatable way to generate, curate, and validate datasets.

Implies organizational design matters: measurement, incentives, and stewardship.

So what?

This is aligned with the “verification and ground truth” theme: competitive advantage can come from building a durable data factory, not just buying models.

Incumbents Kill Startups. Sometimes. - by Fabian Hediger

Summary

A playbook for how platform incumbents copy, bundle, and reprice downstream categories, with the AI labs increasingly acting like platforms because they own distribution through the assistant interface. The piece distinguishes “feature-shaped” startups from “workflow owners” and maps outcomes beyond the simplistic “they copy you, you die.”

Key points

AI assistants are becoming the interface layer, letting model providers move into apps quickly.

Five mechanisms: bundling, self-preferencing, platform rule changes, cross-subsidy/price compression, and data advantage.

Six outcomes: collapse, pivot, acquisition, failed copy, regulation-driven reopening, and coexistence.

Core takeaway: the biggest risk is building something that can be replicated as a bundled feature.

So what?

As an investing lens: prefer companies that own a system of record, permissions, compliance, auditability, and deep integrations. Expect baseline capabilities to get bundled fast; differentiation migrates to responsibility, trust, and workflow depth.

The unraveling of EQRx’s low-cost drug dream

Summary

EQRx tried to build a “low-cost drug” biotech by pairing a pipeline of mostly ex-China assets with a “Global Buyers Club” meant to guarantee demand at lower prices. The effort unraveled when U.S. regulatory requirements (especially around representative trial populations and survival endpoints) raised the cost and timeline, eroding the economic thesis and triggering a long strategic review that ultimately ended in a cash-focused acquisition.

Key points

Public debut via SPAC at roughly $4.2B valuation; five clinical-stage programs across oncology and immune-inflammatory disease.

Lead assets included sugemalimab (PD-L1) and aumolertinib (EGFR); FDA pushed for additional Phase 3 evidence in diverse U.S. populations and survival outcomes.

“No commercially viable path” for sugemalimab in U.S. NSCLC was disclosed; strategy shifted toward market-based pricing for remaining U.S. programs.

Broad outreach to potential acquirers and partners yielded limited engagement; out-licensing attempts also stalled.

End state was an acquisition that primarily valued EQRx’s cash, not its pipeline.

So what?

The story is a reminder that “cost disruption” in biotech is often bounded by regulatory and evidentiary requirements. Any thesis that relies on external clinical data or faster/cheaper paths needs an explicit plan for U.S.-grade confirmatory evidence, and a financing plan that survives when timelines extend.

The US vs. China Manufacturing Debate - YouTube

Summary

A discussion on manufacturing competitiveness and the U.S.–China dynamic, likely emphasizing how policy, scale, supply chains, and industrial strategy shape outcomes.

Key points

Manufacturing advantage is not only labor cost; it is also scale, iteration speed, and supply chain density.

Industrial policy and national security concerns increasingly shape capital allocation.

So what?

For deep tech and hardware theses, the relevant question is less “where to manufacture” and more “how to build an end-to-end learning loop that can iterate fast while managing geopolitical and supply chain risk.”

Copper One: The World’s Only Autonomy-First Mine & Refinery

Summary

A thesis-style profile of building a mine and refinery designed around autonomy from day one, implying a future where extraction and refining are increasingly software-defined operations.

Key points

Autonomy-first design suggests rethinking unit economics, safety, and throughput.

Treating mining/refining as an integrated system can change capex, opex, and staffing assumptions.

So what?

If autonomy can be a first-principles design constraint (not a bolt-on), it can create a step-function improvement in cost and safety. The investment question is what parts are defensible: data, operational know-how, and integrated deployment capability.

GRAIN | Barbarians at the barn: private equity sinks its teeth into agriculture

Summary

An argument that private equity’s expansion into agriculture reshapes incentives and ownership, with implications for farmers, pricing, consolidation, and the resilience of food systems.

Key points

PE strategies can prioritize financial engineering and short-horizon returns over long-term agricultural resilience.

Consolidation can shift bargaining power away from farmers and toward capital owners.

Ownership and control over critical parts of the ag value chain can centralize risk.

So what?

If you are tracking food/ag investing: this provides a “political economy” lens for where regulatory pushback and social risk may increase, and where alternative ownership models or long-horizon capital could differentiate.

THE 2028 GLOBAL INTELLIGENCE CRISIS

Summary

A scenario narrative about a macro regime where AI-driven labor displacement creates “Ghost GDP” (measured output that does not circulate through households), triggering feedback loops across consumption, mortgages, private credit, and government fiscal capacity.

Key points

Depicts a self-reinforcing (vicious) feedback loop: headcount cuts fund more AI spend, which enables more headcount cuts.

Highlights fragility in business models based on intermediation and friction (SaaS pricing, payments interchange, consumer subscriptions).

Argues displacement concentrates in higher-income white-collar segments, hitting discretionary spending disproportionately.

Connects labor shifts to systemic risk in private credit and insurance-linked “permanent capital” structures.

Suggests policy constraints as tax bases tied to human labor shrink.

So what?

Useful as a stress-test lens for portfolios and theses that assume stable labor income growth. Even if the details are wrong, it clarifies where second-order risks might concentrate: consumer demand, credit underwriting assumptions, and “friction toll booth” business models.

Some Simple Economics of AGI

Summary

An economics framework arguing that as AI drives the cost of execution toward zero, the binding constraint shifts to human verification bandwidth. The paper frames future advantage around observability, ground-truth generation, and liability underwriting rather than raw model capability.

Key points

Two racing cost curves: cost to automate falls rapidly, while cost to verify is constrained by human time and feedback latency.

As execution becomes abundant, verification and trust become scarce, creating incentives for unverified deployment.

Risks compound via the “missing junior loop” (eroding apprenticeship) and “codifier’s curse” (experts turning experience into training data).

Predicts a shift from skill-biased to measurability-biased technical change.

Emphasizes “liability-as-a-service” and provenance as durable moats.

So what?

For investing and operating, this points toward picks-and-shovels in verification:

observability tooling,

evaluation and monitoring infrastructure,

provenance/traceability,

business models that sell warranted outcomes rather than tool access.

</AI-Gen>

All views on this page are my own and do not represent the views of my employer, SOSV SF, or any SOSV affiliates. This newsletter is provided for informational purposes only, and should not be relied upon as legal, business, investment, or tax advice. All work is public information, and no confidential information is made available here. Again, this site is for informational purposes only.